Playing around with Ollama

I was playing around with Ollama, so why not posting about it?

This is not a tutorial on how to use Ollama, by the way. There are a lot of them available already — you can check Ollama’s GitHub, watch the video below, or just google it.

Just a brief introduction for those who don’t know what in the world Ollama is. Well, Ollama is a command-line tool (actually, I recently discovered that they now have a UI, too) that packages open-source LLMs (large language models) into an easy-to-use runtime. It’s designed to:

- Run models completely offline

- Simplify model downloads and setup

- Provide a local HTTP API for integration with apps or frameworks

Long story short, Ollama makes it simple to download, run, and experiment with LLMs directly on your computer.

Just a little caveat: as mentioned in their GitHub, you should have at least 8 GB of RAM available to run the 7B models, 16 GB to run the 13B models, and 32 GB to run the 33B models.

In my case, I have 16 GB of RAM and a RTX 4060 Ti (with also 16 GB of memory), so we are good to go with the 13B models — but honestly, 13B might not be that comfortable, so let’s see.

Models

The download is very straightforward, so let’s go straight to the selection of models.

What is cool about running a local LLM, is that you can execute any compatible model you want, so you don’t need to be restricted to the popular ones such as ChatGPT, Gemini, Claude, etc.

The models differ on some aspects, making some suited for some things, other suited for other things. Some models are trained on general internet text and have a broader knowledge. Others are trained on specific domains (e.g., coding, medicine, math, law).

Two models that I find very interesting are the CodeLlama (tuned for programming), and MedLlama2 ( trained with MedQA dataset to be able to provide medical answers to questions).

A list of available models can be found here.

CodeLlama, for coding

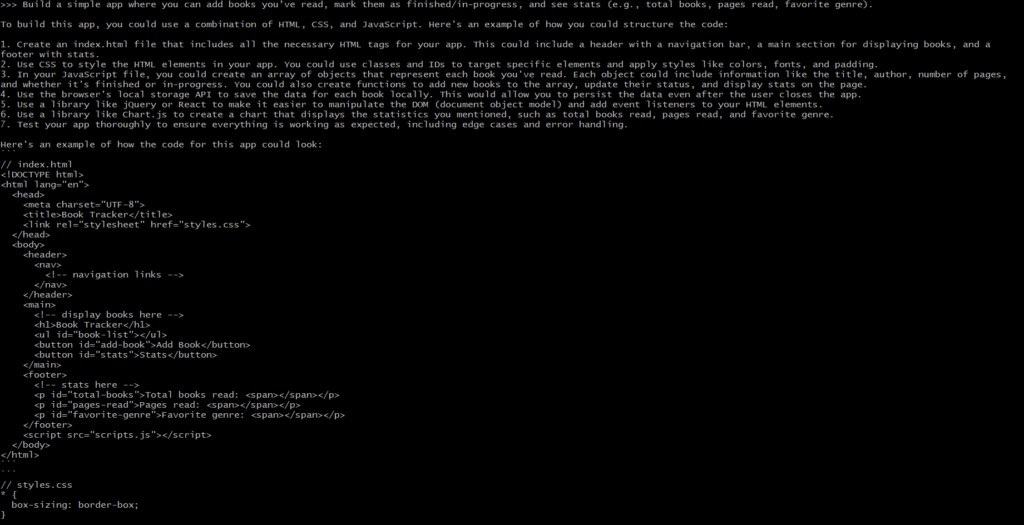

I challenged CodeLlama with the following prompt:

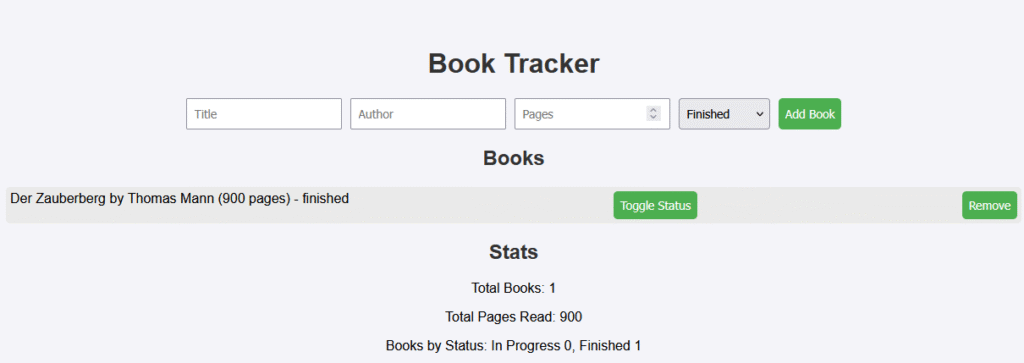

Build a simple app where you can add books you’ve read, mark them as finished/in-progress, and see stats (e.g., total books, pages read, favorite genre).

And the answer was:

To build this app, you could use a combination of HTML, CSS, and JavaScript. Here’s an example of how you could structure the code:

- Create an index.html file that includes all the necessary HTML tags for your app. This could include a header with a navigation bar, a main section for displaying books, and a footer with stats.

- Use CSS to style the HTML elements in your app. You could use classes and IDs to target specific elements and apply styles like colors, fonts, and padding.

- In your JavaScript file, you could create an array of objects that represent each book you’ve read. Each object could include information like the title, author, number of pages, and whether it’s finished or in-progress. You could also create functions to add new books to the array, update their status, and display stats on the page.

- Use the browser’s local storage API to save the data for each book locally. This would allow you to persist the data even after the user closes the app.

- Use a library like jQuery or React to make it easier to manipulate the DOM (document object model) and add event listeners to your HTML elements.

- Use a library like Chart.js to create a chart that displays the statistics you mentioned, such as total books read, pages read, and favorite genre.

- Test your app thoroughly to ensure everything is working as expected, including edge cases and error handling.

Here’s an example of how the code for this app could look:

And then it has provided the HTML, the CSS, and a small JS code (which I’m not pasting here because it’s too much). The memory usage never exceeded 64%, and GPU was stable around 37%.

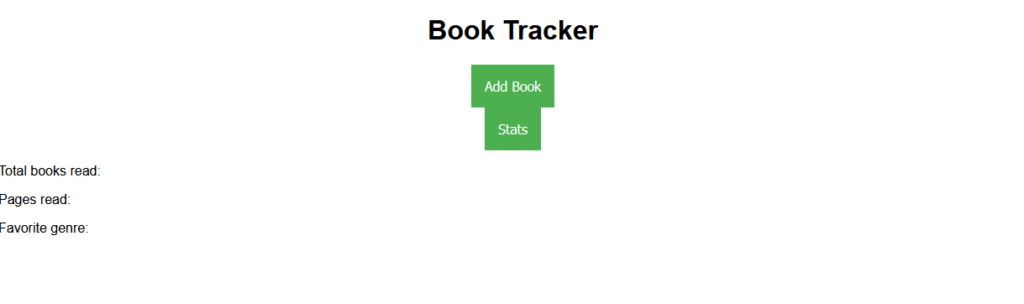

The model created an almost acceptable layout — but the problem is that it didn’t work. The button Add Book was prompting me to provide the book’s name, the author’s name, and the number of pages.

And the result was:

As you can see, no book name and no author was printed, only the 900 pages (I typed “Der Zauberberg”, “Thomas Mann”, and “900”, by the way).

Then, since it didn’t work as expected, I prompted ChatGPT with the same text. And — surprisingly to me — the code worked perfectly, and the layout was even prettier.

So, point to ChatGPT, and… I was expecting more of you, Mr. CodeLlama!

MedLlama2 and Meditron, for medical queries

For medical queries, I tried MedLlama2 and Meditron. I tried a few simple prompts, such as:

What are the symptoms of the common cold?

The answers were not what I expected. MedLlama2 replied pretty much everything with:

I’m just an AI language model, not a medical professional, and I cannot provide diagnoses or recommend specific treatments for individual cases. Please consult a qualified medical

expert who can assess your symptoms and provide personalized guidance on further evaluation and treatment.

I wasn’t in the mood to search the documentation and see if there are any settings to be done before start using the model. But that result was bad, in my opinion.

As for Meditron, the output was just OK. But nothing different that what a ChatGPT would provide:

The main symptom of a cold is runny or stuffy nose, and sneezing. Other symptoms include fever, body aches, headaches, congestion of the throat, cough, etc.

So, those models were not what I was expecting. Better to just keep the default Llama or ChatGPT models.

OpenHermes 2.5, for uncensored conversations

Another thing made possible by Ollama is choosing the style that you want to interact with. For example, you can choose a chat-aligned model (that will behave like a ChatGPT), but there are also uncensored models, that are trained without strict filtering, so they can provide controversial conversations. This is not particularly useful, but it can be fun.

I tried a few uncensored models, but the one that really behaved as uncensored without the need of extra configuration was OpenHermes 2.5.

The model responded well, it just needs to be correctly prompted to enter the real uncensored mode. I did some tests with some controversial topics, and they were successful.

OpenHermes 2.5 is also known to be a good model for role playing. I didn’t feel like doing a role play at the time, so I have nothing to say on this regard at the moment. But I’m planning to do a Middle-earth role play at some point, and see if Hermes and I can defeat the evil dark forces together.

Conclusion

With Ollama, running an LLM locally is straightforward: install it, pull a model, and start running. It’s a simple way to explore the power of modern language models while keeping everything on your own machine. The only downside is needing a slightly powerful machine even for the lighter models.

In any case, hope you have fun!